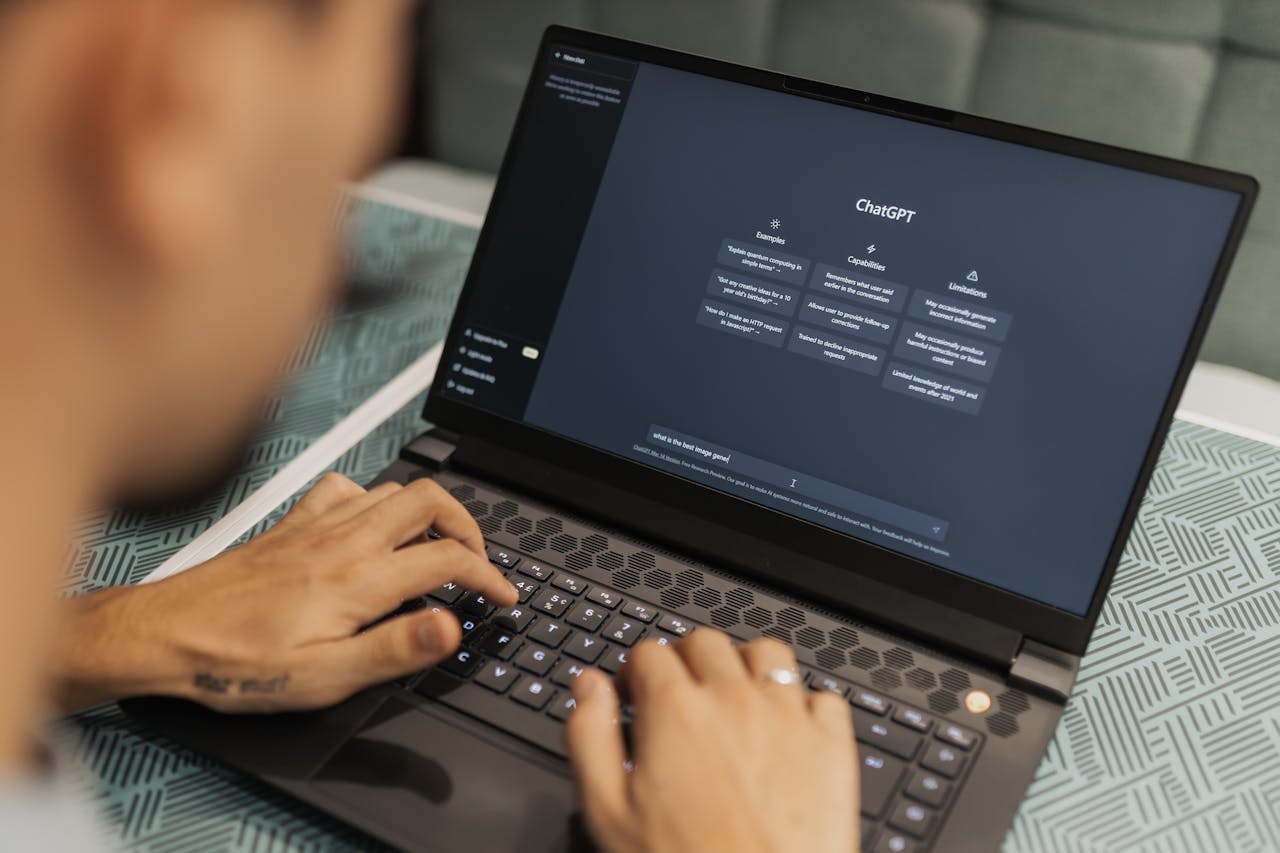

Ethical concerns surrounding the development and implementation of artificial intelligence (AI) have become increasingly critical as the technology revolutionizes industries. From bias in algorithms to the potential for mass surveillance, AI has the power to shape societies profoundly.

Leadership plays a decisive role in ensuring that AI is developed responsibly, aligning technological advancements with ethical principles that promote fairness, transparency, and accountability.

1. Setting a Strong Foundation

Effective leaders must establish a clear ethical framework that guides AI development within their organizations. This involves defining core values such as fairness, inclusivity, and respect for privacy.

Leaders should prioritize ethical considerations from the outset, embedding them into AI design rather than treating them as an afterthought.

2. Encouraging Diversity

Bias in AI often stems from a lack of diversity in the teams that develop it. Leaders must champion diverse hiring practices, ensuring that AI teams include individuals from various backgrounds, disciplines, and perspectives.

This diversity fosters more inclusive and equitable AI systems, reducing the likelihood of biased algorithms that disproportionately impact specific communities.

3. Implementing Transparency

Transparency is key to ethical AI. Leaders should advocate for clear AI governance policies that outline how algorithms are trained, tested, and deployed.

Openly sharing AI decision-making processes allows for greater accountability and helps build trust among stakeholders, including customers, employees, and regulatory bodies.

4. Prioritizing Accountability

One of the biggest challenges in AI ethics is the “black box” problem, in which AI systems make decisions that are difficult to explain.

Ethical leadership requires a commitment to explainability, ensuring that AI outcomes are understandable and justifiable. Leaders must also establish accountability measures so that their organizations take responsibility for AI-driven decisions.

5. Engaging in Training

AI ethics is a rapidly evolving field, and ongoing education is essential. Leaders should implement regular ethics training for AI developers, data scientists, and decision-makers.

By fostering a culture of ethical awareness, leaders can mitigate risks before they become critical issues.

6. Collaborating with Regulators

Regulatory frameworks for AI are still developing, but proactive leaders engage with policymakers and industry peers to help shape ethical guidelines.

By participating in AI ethics committees and advocating for responsible AI regulations, leaders ensure that their organizations remain compliant while contributing to the broader ethical AI landscape.

7. Addressing AI’s Societal Impact

Beyond organizational ethics, leaders must consider the broader societal implications of AI technologies.

Ethical AI development requires assessing how AI affects employment, privacy, security, and human rights. Leaders should proactively mitigate negative impacts, ensuring that AI is a force for good rather than a tool for exploitation.

8. Promoting Human-Centric AI

Ethical AI leadership requires prioritizing human needs over efficiency gains. Leaders should ensure AI enhances human decision-making rather than replacing it entirely.

This means designing AI systems that complement human intuition, creativity, and empathy rather than diminishing them.

9. Establishing Committees

Leaders should form AI ethics review committees to ensure AI development aligns with ethical standards.

These committees, consisting of ethicists, technologists, legal experts, and community representatives, can assess AI initiatives for fairness, potential biases, and unintended consequences before deployment.

10. Encouraging Responsible Innovation

While AI presents opportunities for unprecedented innovation, responsible leadership ensures that progress does not come at the expense of ethics.

Leaders must balance innovation with ethical responsibility, resisting the temptation to push AI products to market without thorough ethical evaluation.

Mario Findlay | Contributing Writer